nextmv-v1.6.1

April 11, 2026

What's Changed

- Fixes init for pyinstaller releases by @merschformann in #279

- Release v1.6.1 by @merschformann in #280

nextmv-v1.6.0

April 10, 2026

What's Changed

- Binary release of CLI by @merschformann in #268

- Conducts local runs via uv by @merschformann in #275

- Bump version to v1.6.0 by @sebastian-quintero in #277

Changelog

April 10, 2026

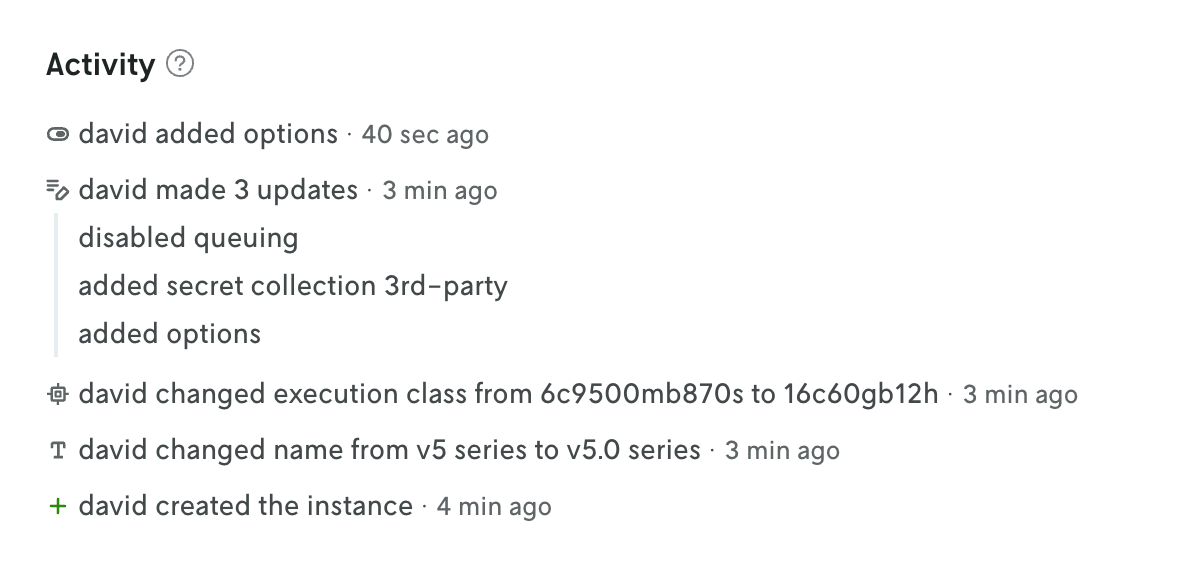

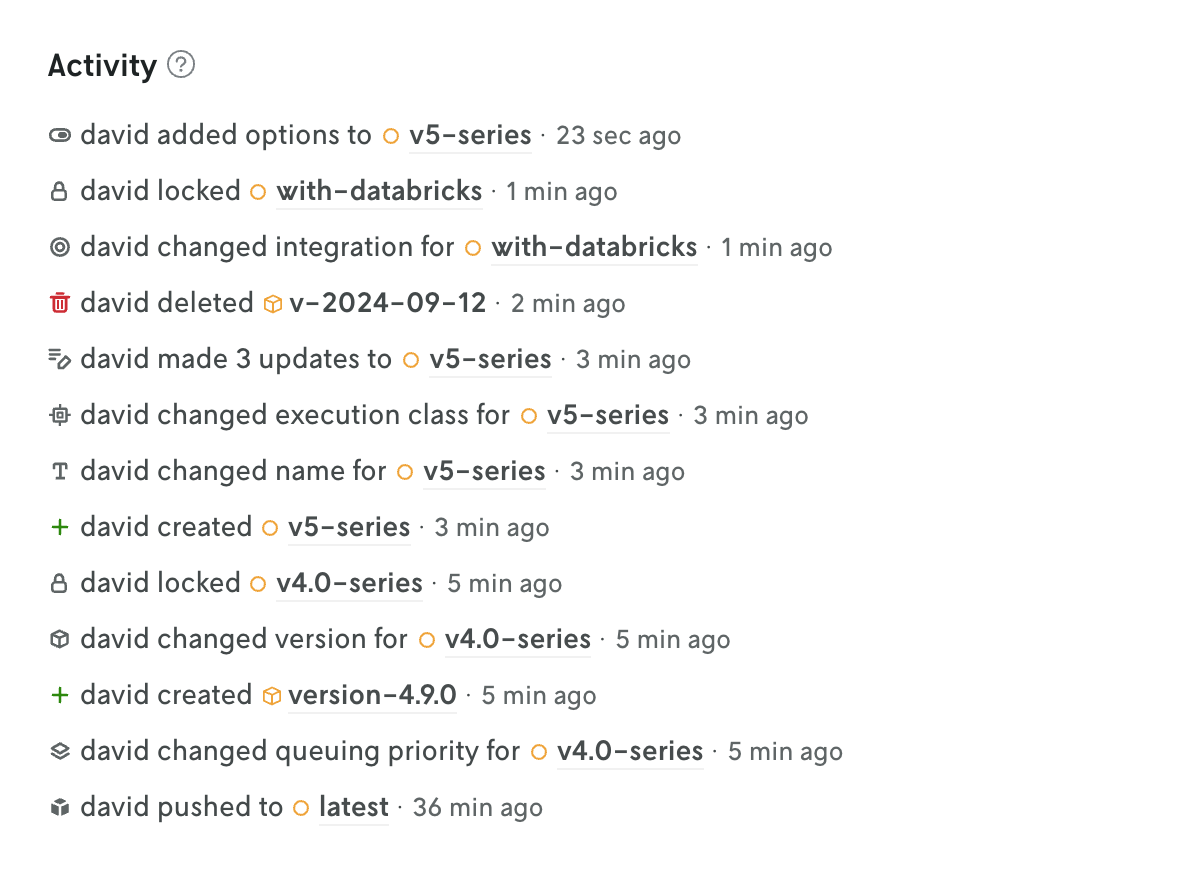

Updates to apps, instances, and versions are now being tracked and displayed in Console.

The changelog is displayed at the bottom of the entity’s details view. The tracked changes include the user who made the change, what was changed, and a timestamp of when the change occurred. In many cases the value of what changed is displayed as well.

At the app level, the changes for all the different entities are collected and displayed on the App Overview page (including changes to the app itself, like when a default instance is set for the app). This is a great way to see the overall activity for an app without having to click into different views. You can also click on individual changelog entries in this app activity view to see the full changelog entry on the entity’s details view.

You can read more about what data is tracked and when tracking began on the Changelog docs page.

v1.12.4

April 9, 2026

What's Changed

- Moves workflows to Go 1.26 by @merschformann in #124

- Fix Distance Limit Constraint Panic by @nmisek in #126

- Simplifies version bump by @merschformann in #127

Full Changelog: v1.12.3...v1.12.4

nextmv-v1.5.0

April 8, 2026

What's Changed

- Allows defining local only options by @merschformann in #269

- Upgrades uv lock for security fixes by @merschformann in #271

- Stream logs in real-time during `local` execution for CLI and SDK by @sebastian-quintero in #270

- Release v1.5.0 by @merschformann in #274

v0.7.0

April 7, 2026

What's Changed

- Fixes examples by @merschformann in #47

- Adds app bypass by @merschformann in #46

- Release v0.7.0 by @merschformann in #48

Full Changelog: v0.6.1...v0.7.0

nextmv-v1.4.1

April 7, 2026

What's Changed

- Make `Client` optional for an `Application`, allow `NEXMTV_ENDPOINT` by @sebastian-quintero in #267

- Release v1.4.1 by @merschformann in #265

nextmv-v1.4.0

April 1, 2026

What's Changed

- Improves managed input run support by @merschformann in #258

- Fix bug in creating an input set solely with run IDs in `nextmv cloud input-set create` CLI cmd by @sebastian-quintero in #257

- Upgrades locked dependencies for security by @merschformann in #260

- Add more examples to the `nextmv init` command by @sebastian-quintero in #259

- MCP server for agents by @ryanjoneil in #237

- Release v1.4.0 bump by @merschformann in #261

nextmv-v1.3.0

March 18, 2026

What's Changed

- Onboarding tutorial / initialize a template by @sebastian-quintero in #250

- Avoids copying the manifest file to the output by @sebastian-quintero in #251

- Release v1.3.0 by @sebastian-quintero in #253

nextmv-v1.2.2

March 9, 2026

What's Changed

- Revises log func definition by @merschformann in #249

nextmv-v1.2.1

March 9, 2026

What's Changed

- Chore/integ test stability improvements by @cowanwalls in #246

- Docs/managed input by @cowanwalls in #247

- Allows custom log callback by @merschformann in #248

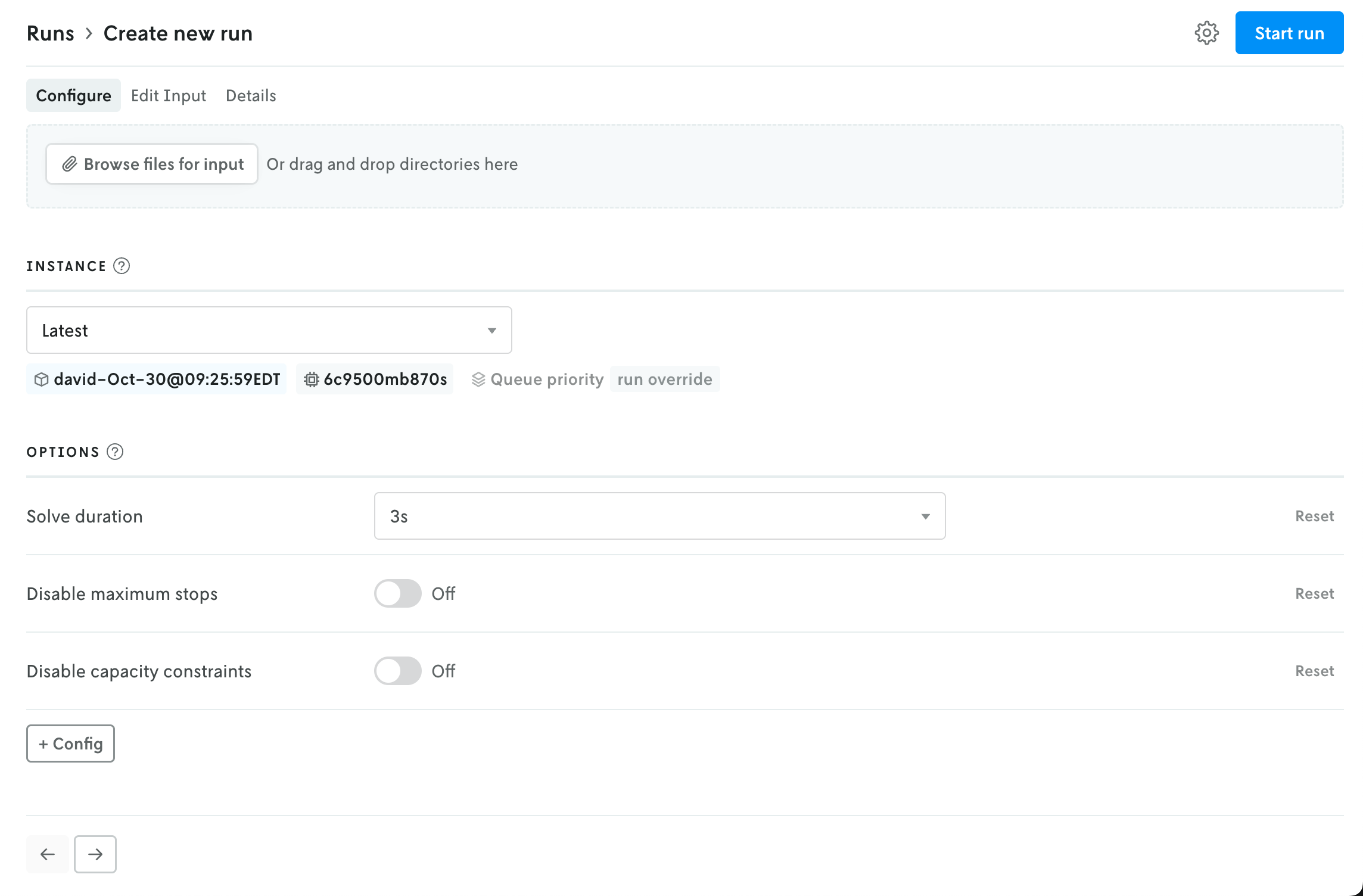

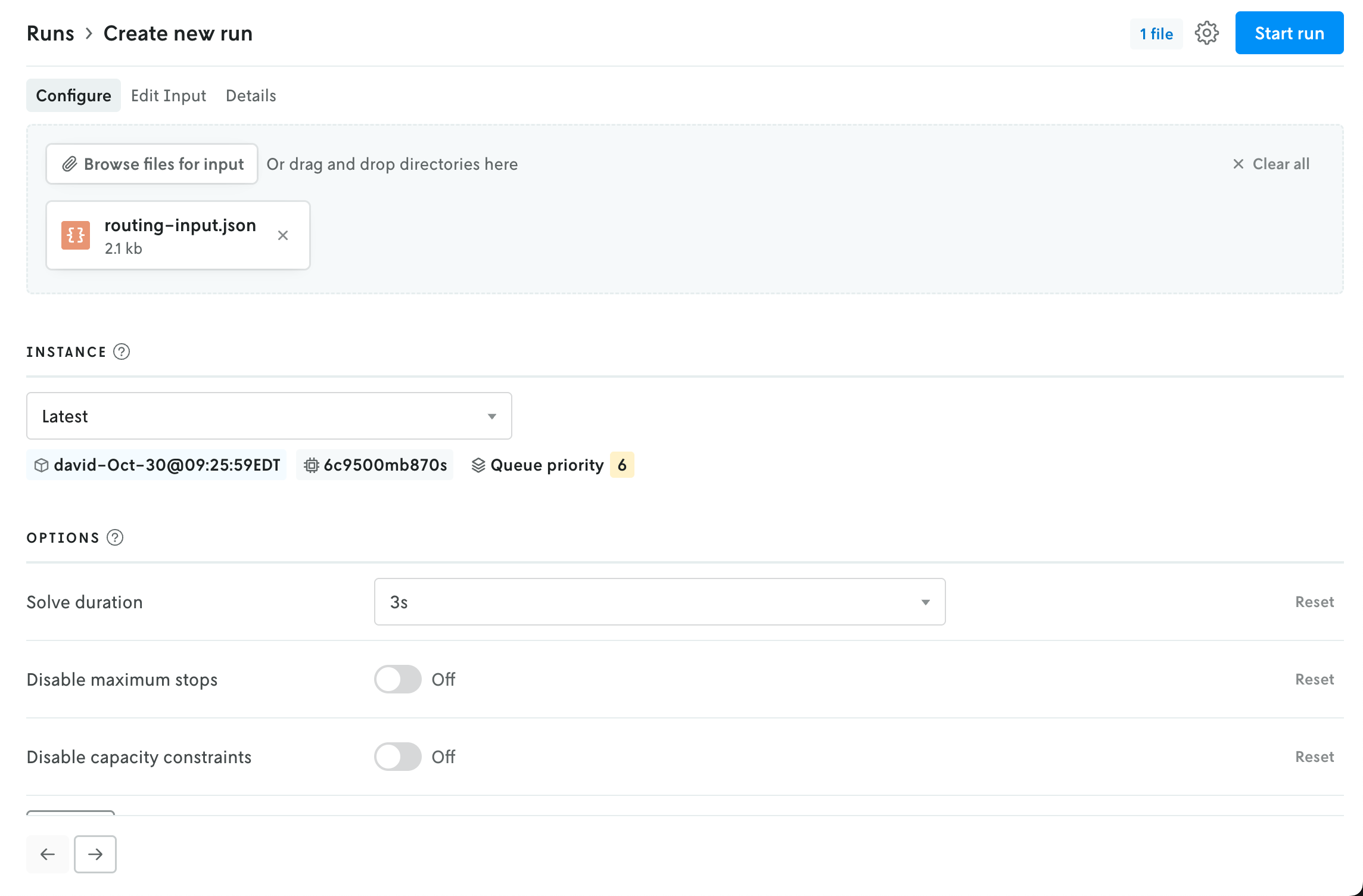

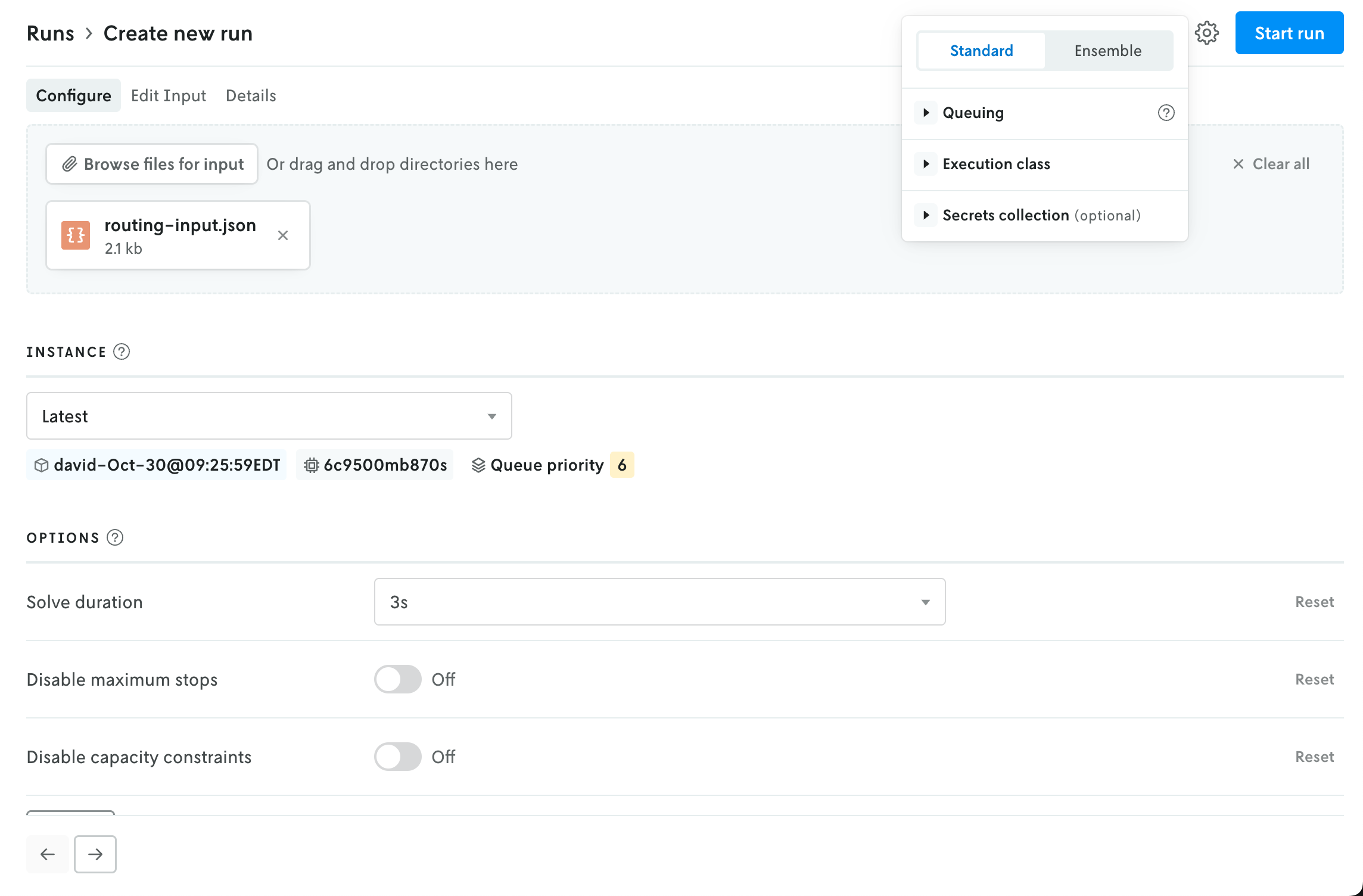

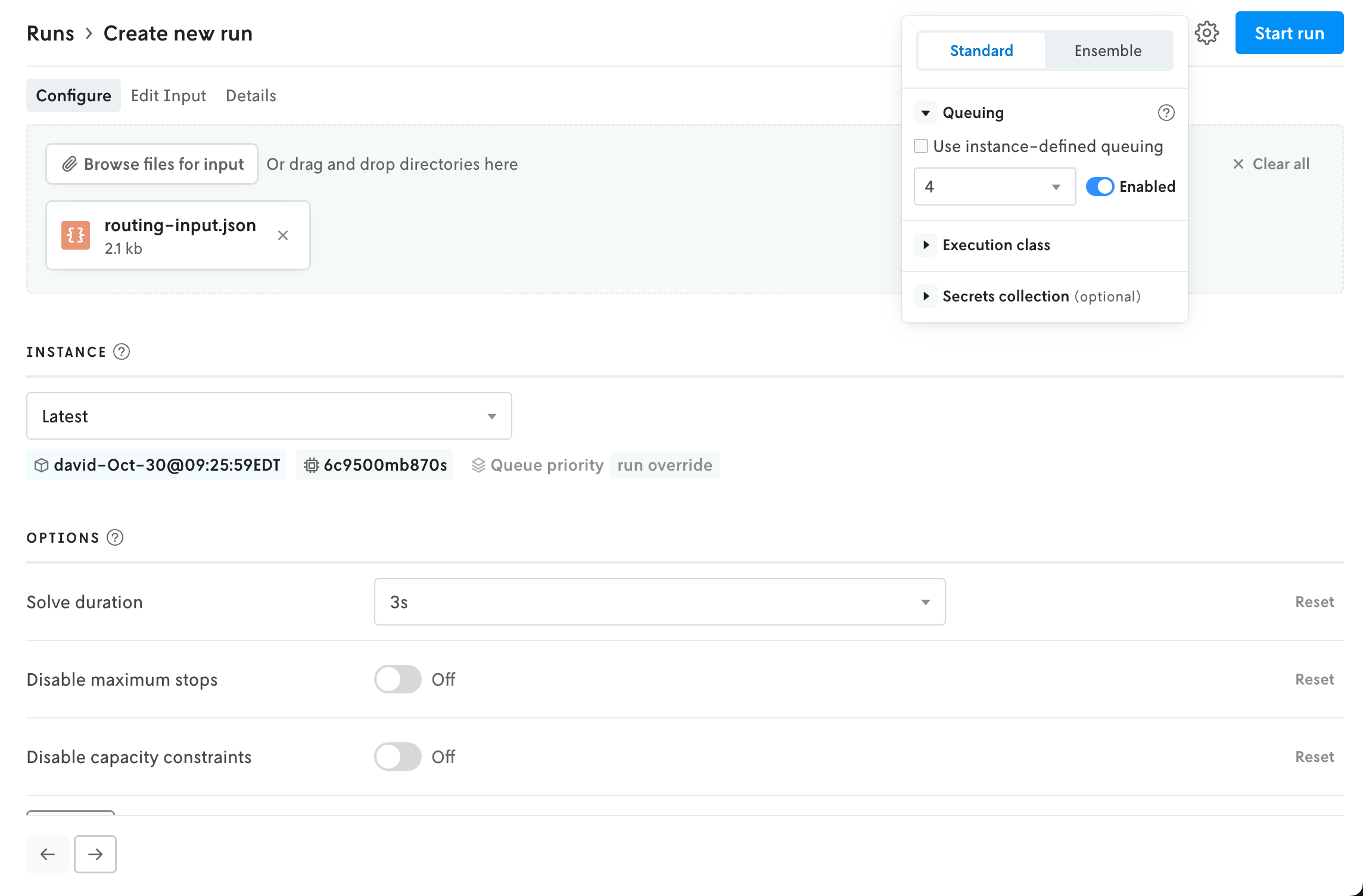

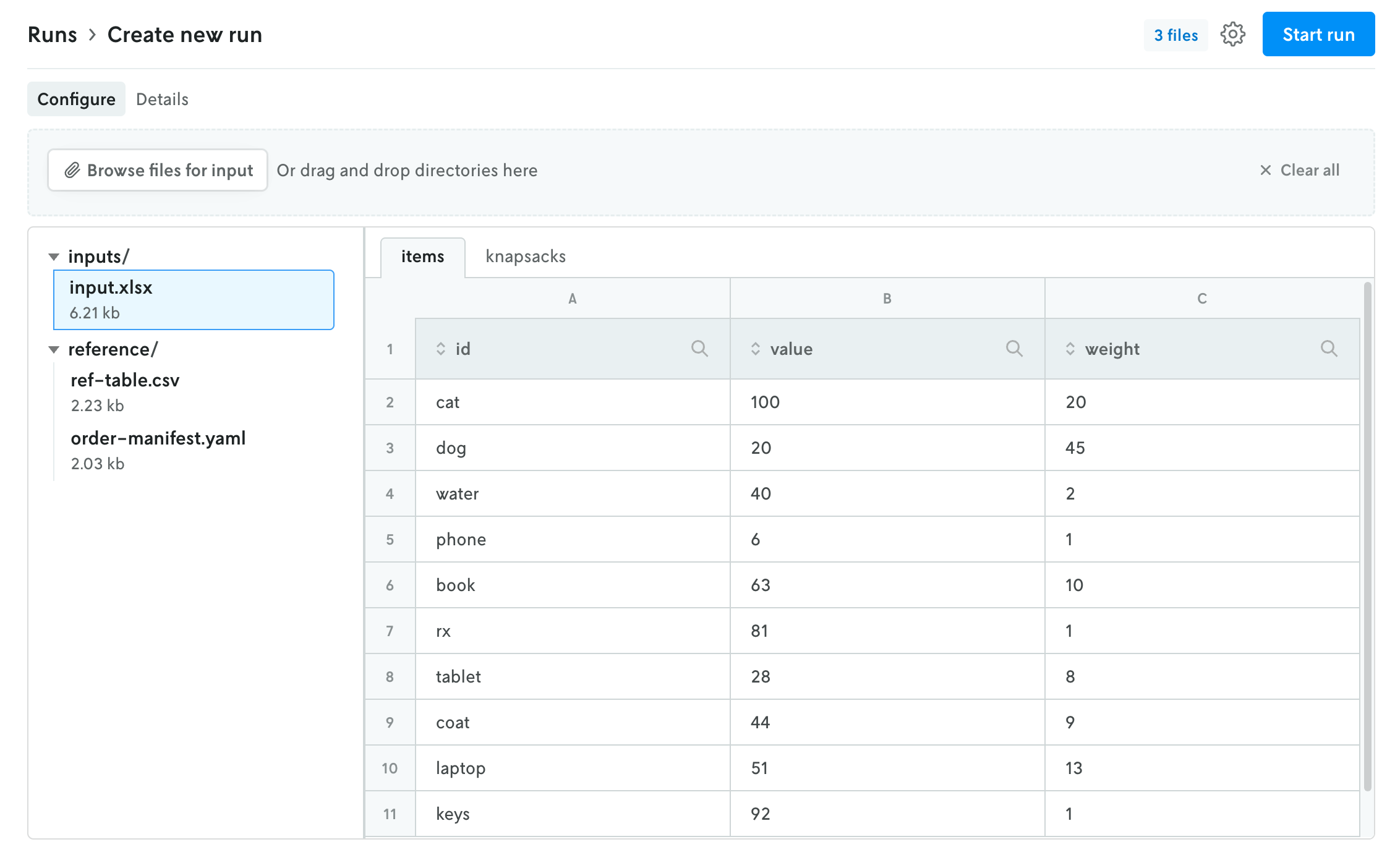

New create run view

January 13, 2026

The create run view has been reorganized into a tabbed view interface to better reflect the flow for creating a run and make space for new features in the future.

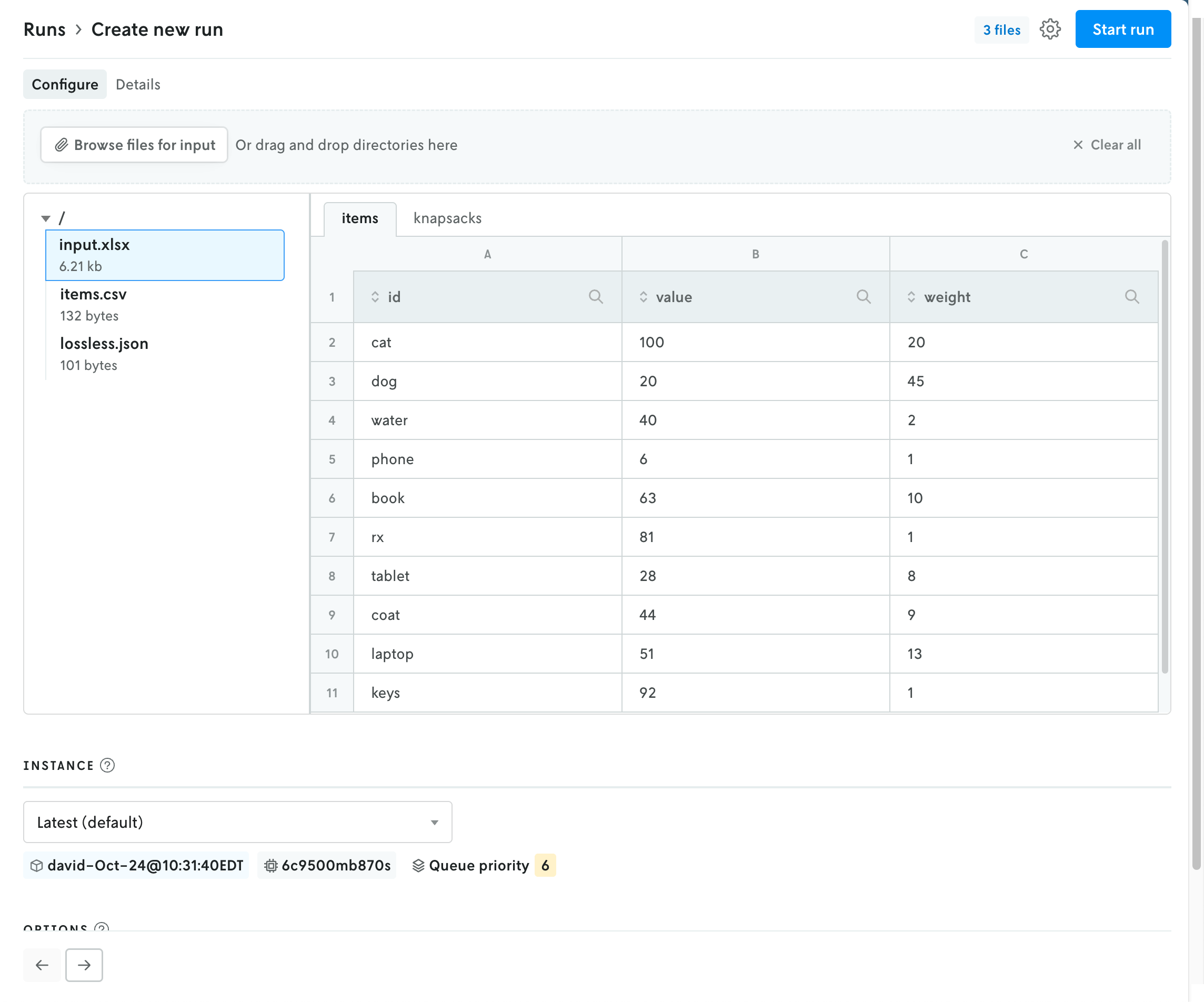

The initial view provides an uploader for the run input and an interface for selecting an instance (note that if your role is Operator then the UI for selecting an Instance is moved to the advanced settings menu). Depending on the format type for the app, the uploaded file(s) will be represented with either a file icon or shown in the multi-file viewer.

How files appear for apps that use JSON format.

How files appear for apps that use multi-file format.

Updating the instance will refresh the available options for the run in the space below. Note that the Latest instance will be selected by default if no default instance is set for the app.

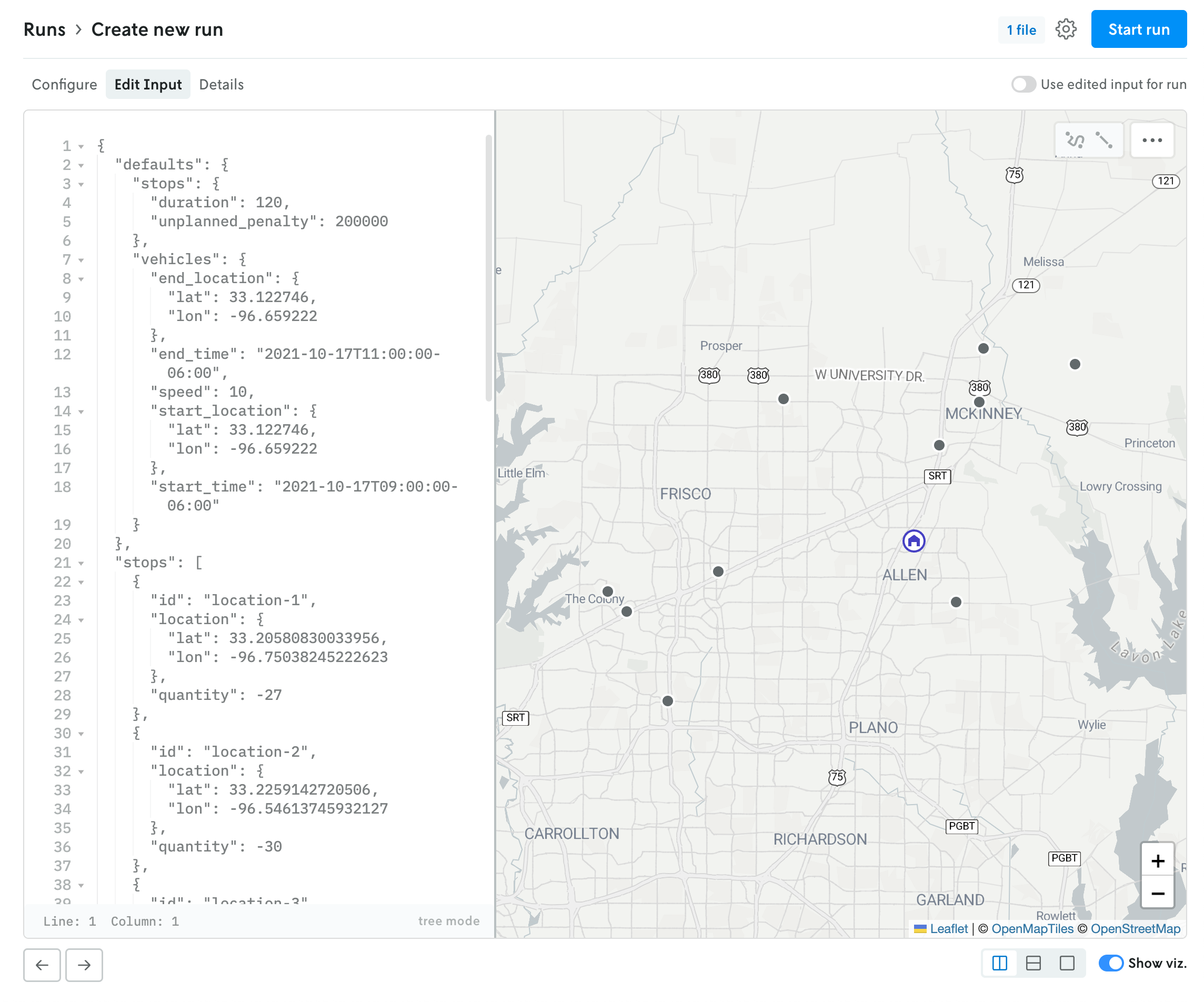

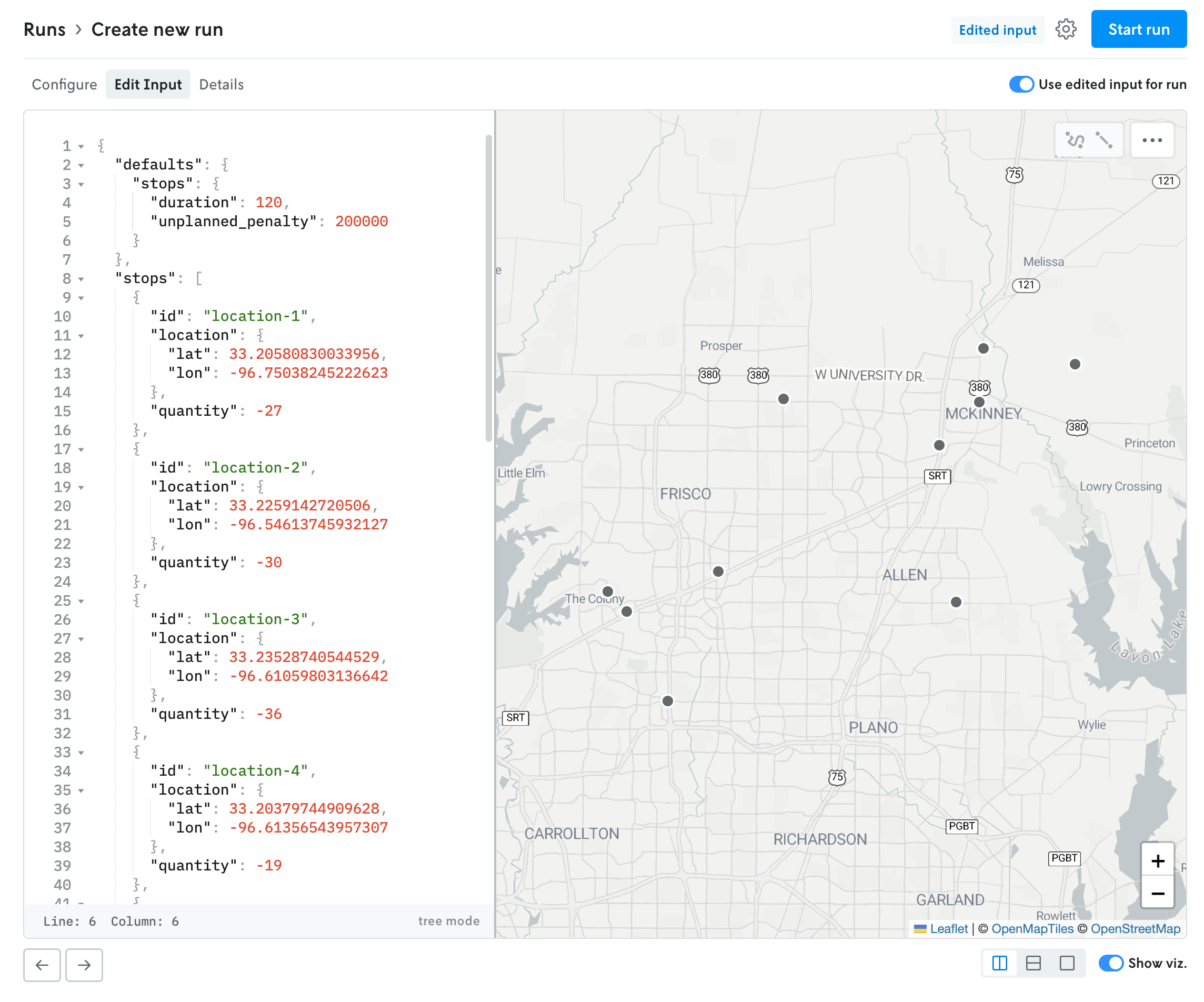

If the format type is JSON and the uploaded file is below the render threshold of 10 MB, its contents will be loaded into the JSON editor in the edit input view. You can click on the Edit Input tab to view the contents of the uploaded file. If you would like to edit the contents and use the edited input for the run rather than the uploaded file, toggle the “Use edited input for run” option. (You can toggle this back off as well, and the run will use the original uploaded file for input.)

JSON run with “Use edited input for run” toggled off.

JSON run with “Use edited input for run” toggled on.

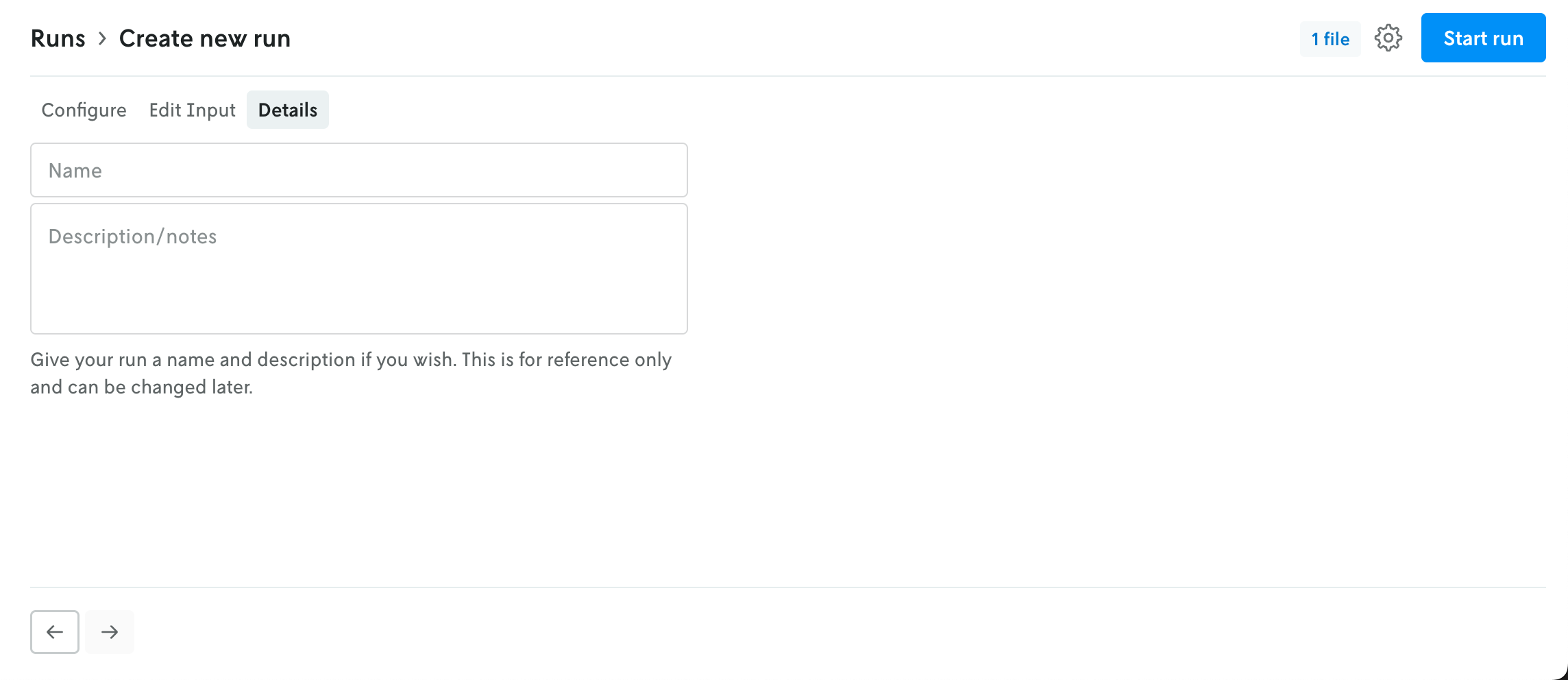

The Details tab allows you to name your run and add a description if you would like. Both of these fields are optional.

In the upper right, an advanced menu collects the rest of the run settings into a single dropdown. This menu contains the UI for adjusting the run queue settings, selecting a specific execution class for the run, and assigning a secret collection to the run. Note that any run-level setting will override an instance-level setting (this is reflected in the UI as well).

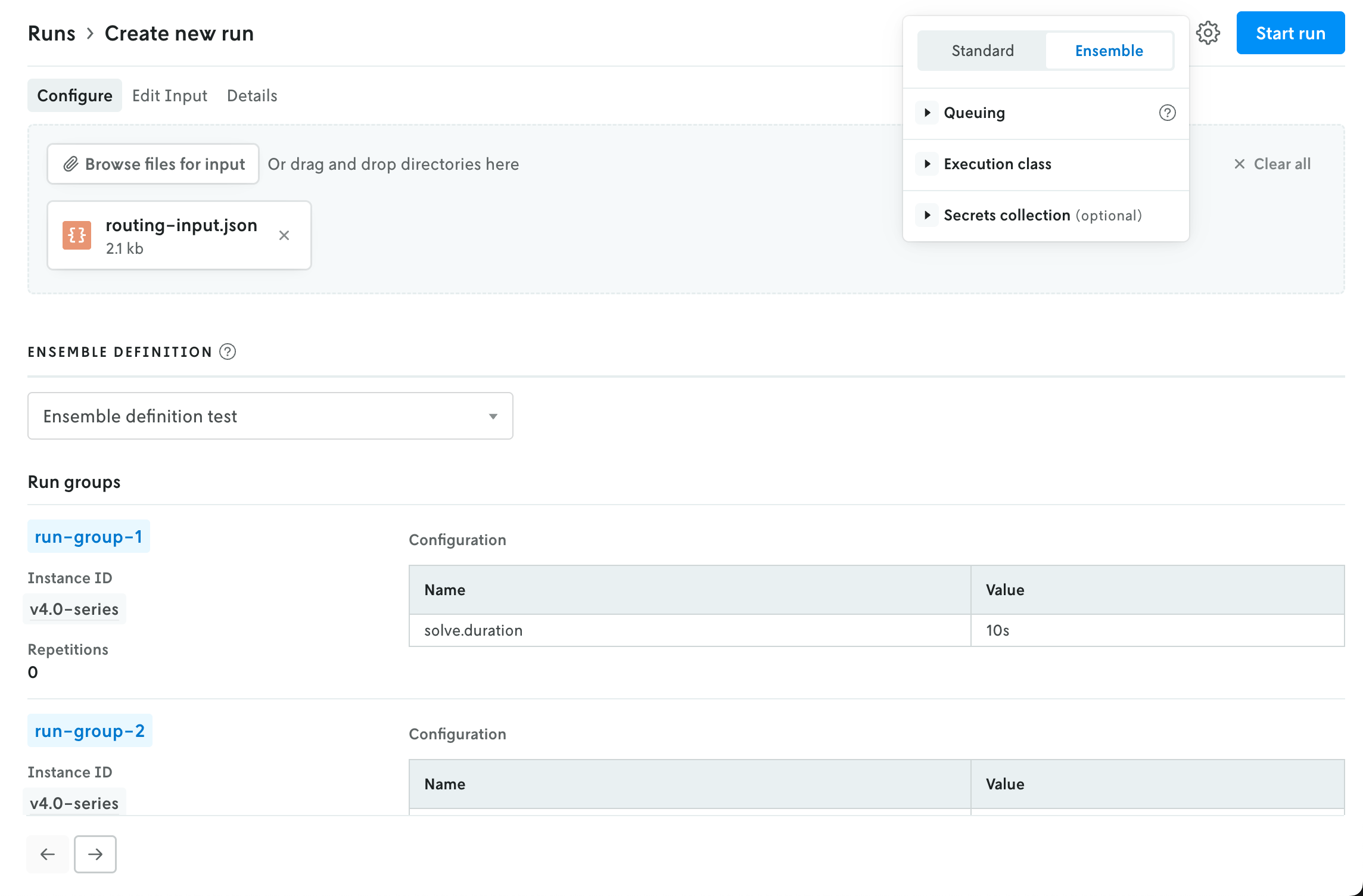

The advanced menu is also where you select the run type. Standard is the default setting; if you select Ensemble then the UI in the main view will be adjusted to show the options for creating an ensemble run (the instance selector is replaced with a dropdown select menu for ensemble definitions).

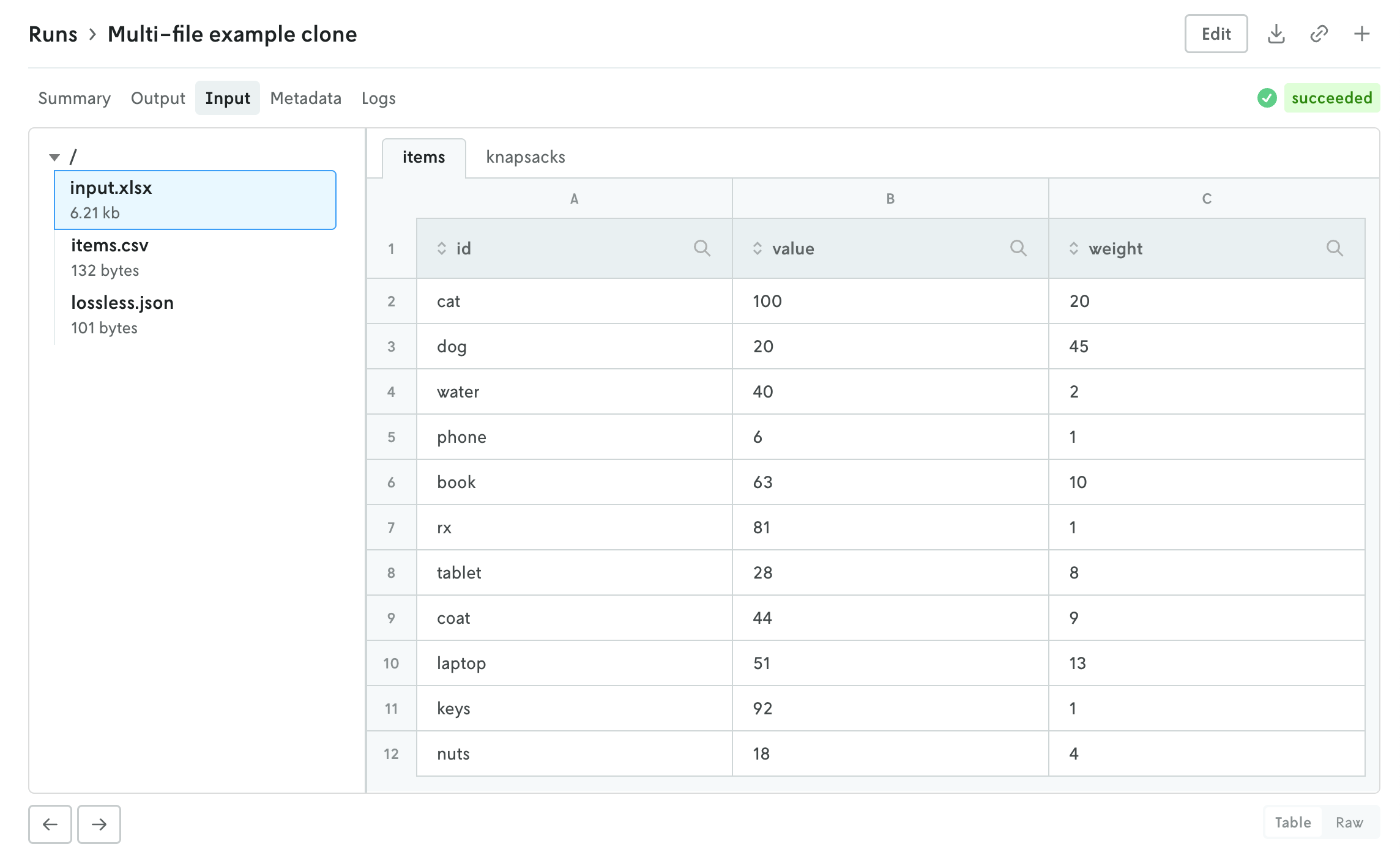

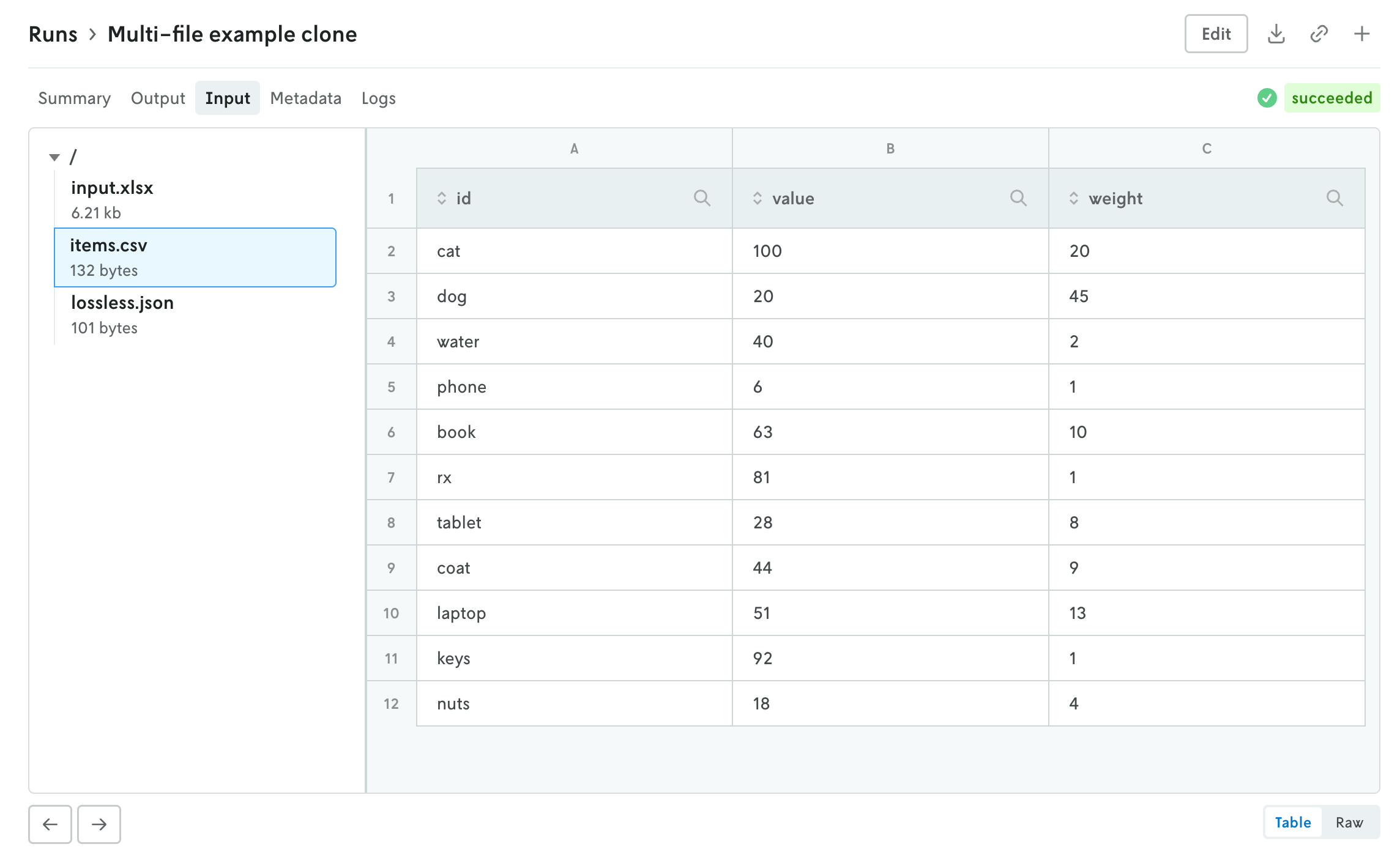

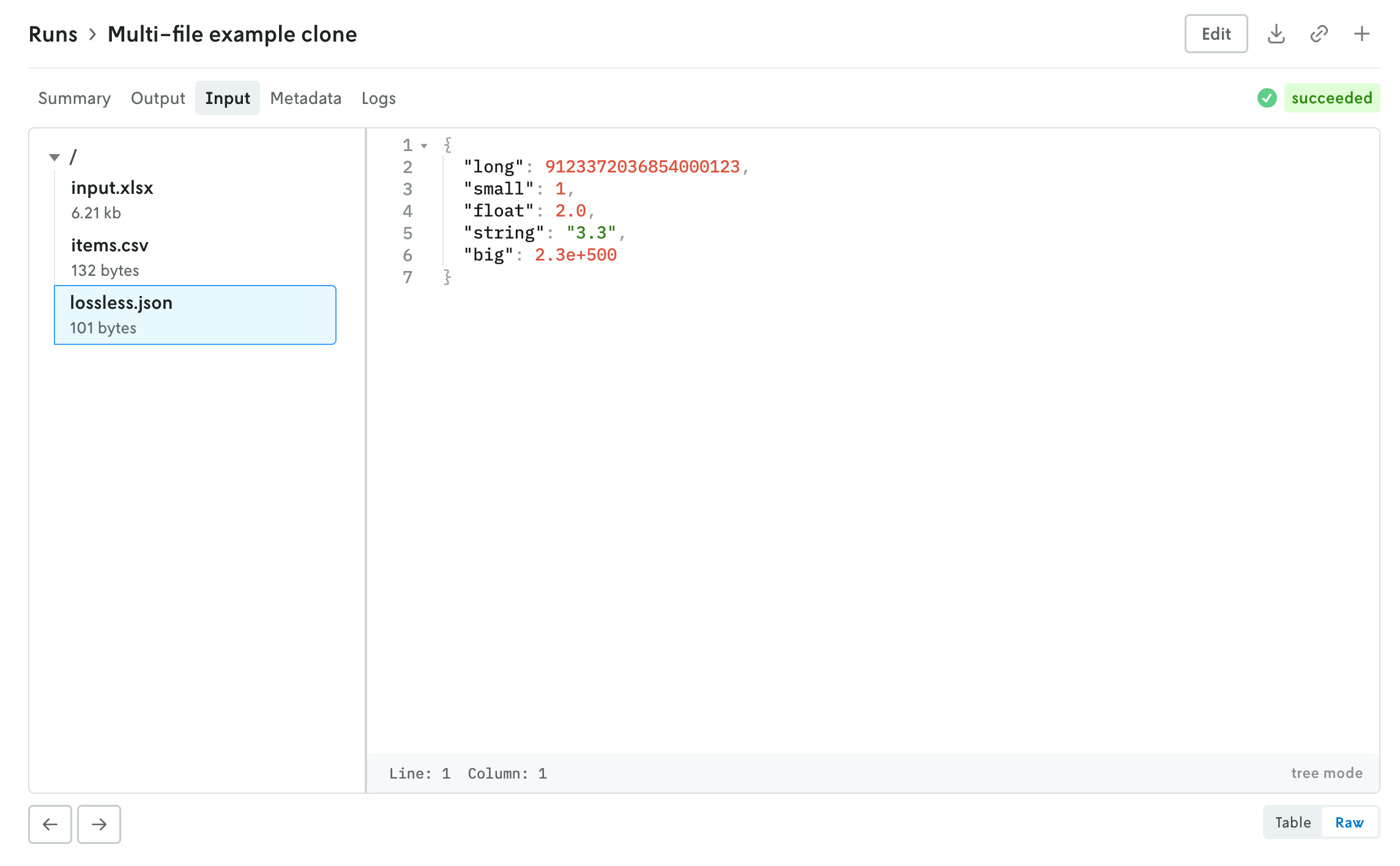

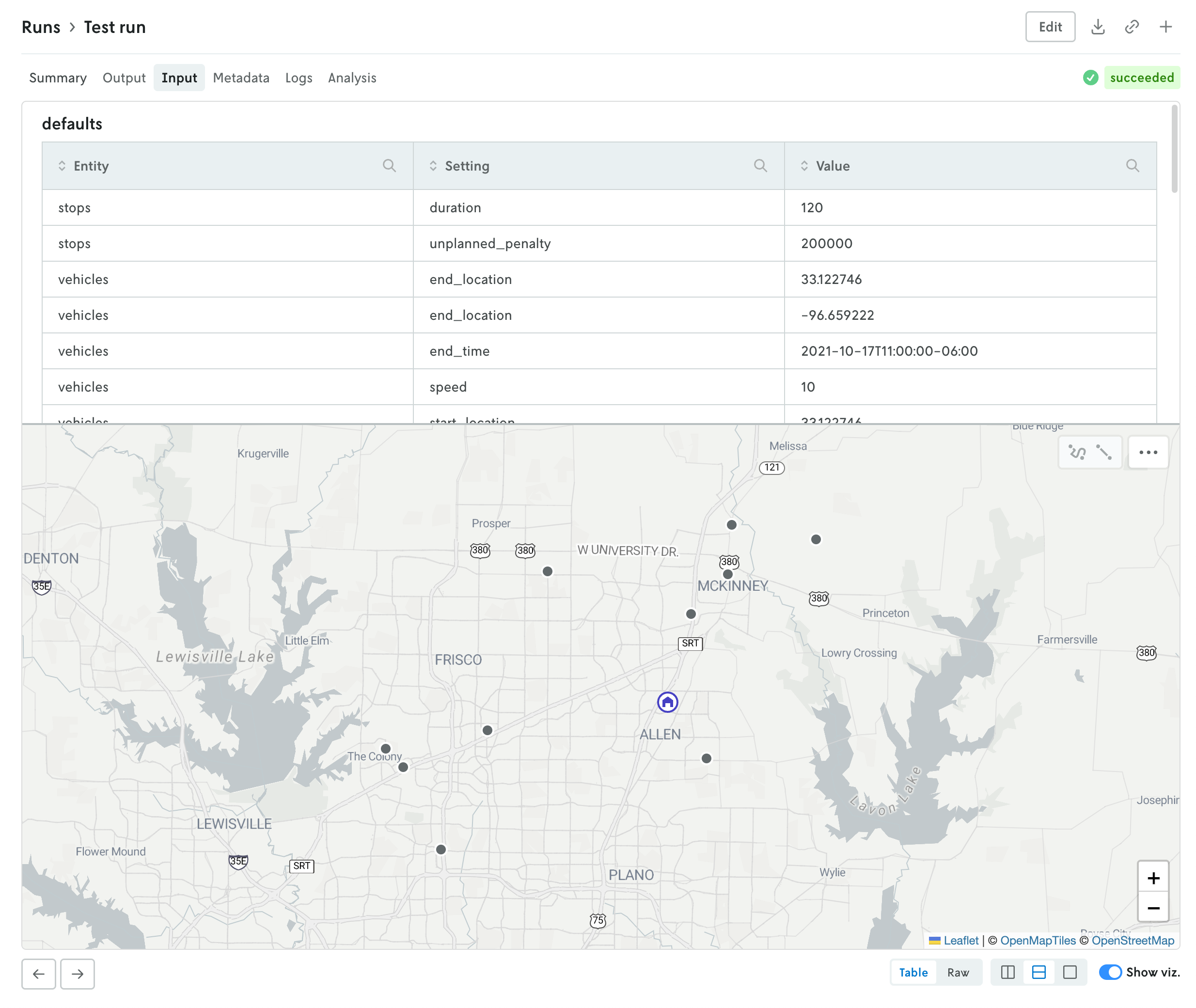

Multi-file viewer

December 4, 2025

Console has a new multi-file viewer that allows you to browse the contents of multi-file input and output. You can view CSV and JSON files in table or raw views, XLSX files (powered by SheetJS Community Edition), and other file types such as .txt and .yaml.

The left side is the file browser and the right side displays the contents of the file. You can also browse the contents of a multi-file run input on the create run view before you make the run. Note that for multi-file runs you can drag and drop files and directories and the directory structure will be preserved.

The file size threshold for viewing individual files within a multi-file run in Console is 10 MB. Any file over 10 MB will display an interface for downloading the individual file. You can also download the entire input or output file using the standard action buttons in the header of the run details view.

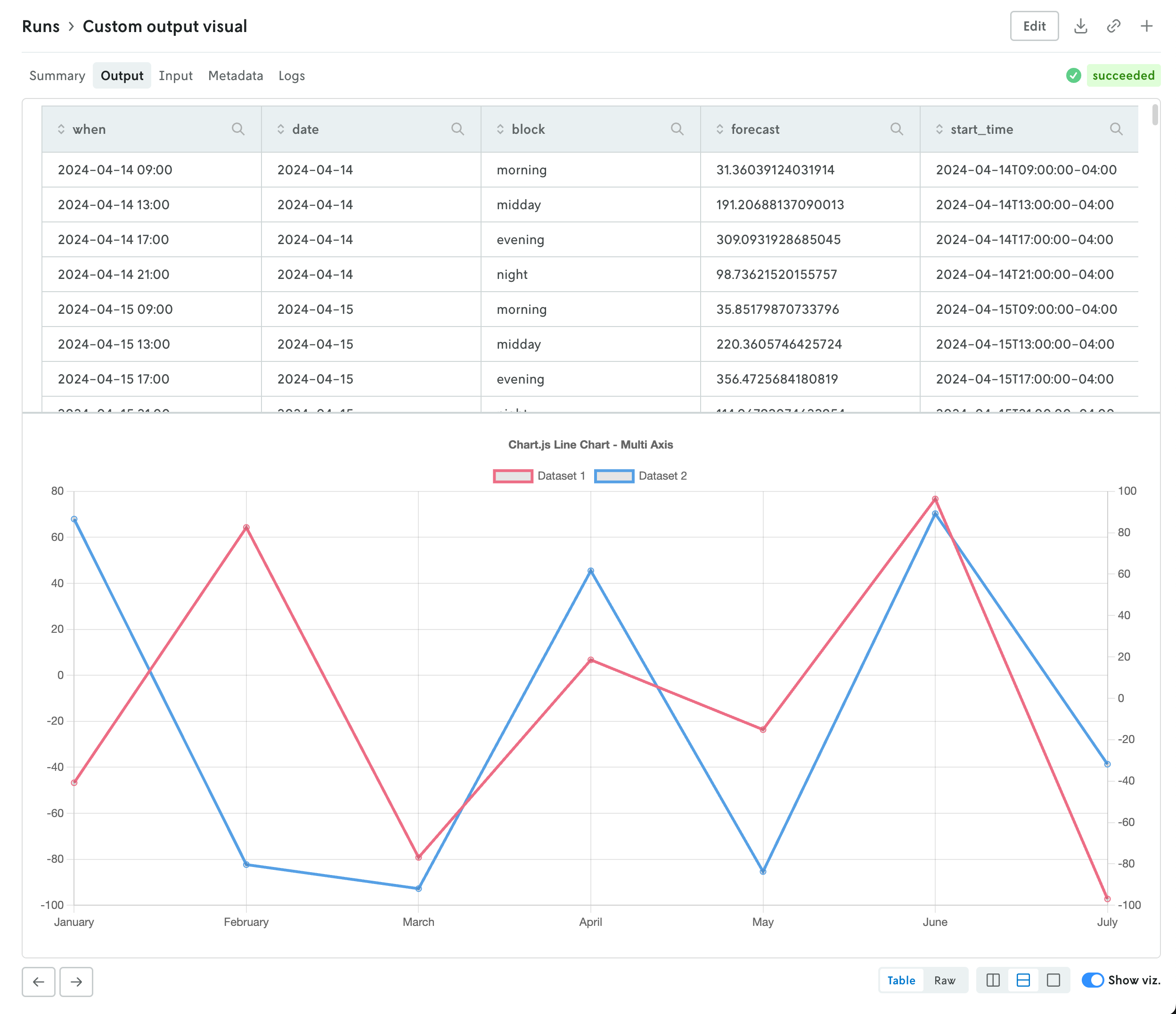

Custom run output visualizations

November 25, 2025

Using custom run visuals, you can now set a custom visual for the run output tab. Previously, the output would only have a visual if it matched the set schemas for routing or scheduling apps. Now you can set a custom output visual for any type of app.

To assign a custom run visual to the output tab, set the type value to be output-visual:
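As a rough sketch, the asset for such a visual could be assembled as a Python dictionary. Only the `"type": "output-visual"` value comes from this note; the surrounding keys (`name`, `content_type`, `visual`, `schema`, `label`, `content`) and their values are illustrative assumptions about the custom visual layout and should be checked against the custom visuals docs.

```python
import json

# Hypothetical custom visual asset for the run output tab.
# Only "type": "output-visual" is taken from the release note;
# all other keys and values are assumptions for illustration.
output_visual_asset = {
    "name": "Demand chart",
    "content_type": "json",
    "visual": {
        "schema": "chartjs",      # assumed charting schema name
        "label": "Demand chart",  # assumed label shown in Console
        "type": "output-visual",  # places the visual on the output tab
    },
    "content": [
        {
            "type": "bar",
            "data": {"labels": ["A", "B"], "datasets": [{"data": [3, 5]}]},
        }
    ],
}

# Serialize the asset so it can be included in the run output.
print(json.dumps(output_visual_asset, indent=2))
```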

Your custom visual will have the same controls as the standard routing and scheduling visualizations so you can enable split screen views (or single views) on the data and corresponding visualization. Note that custom visuals will override any standard output visualizations.

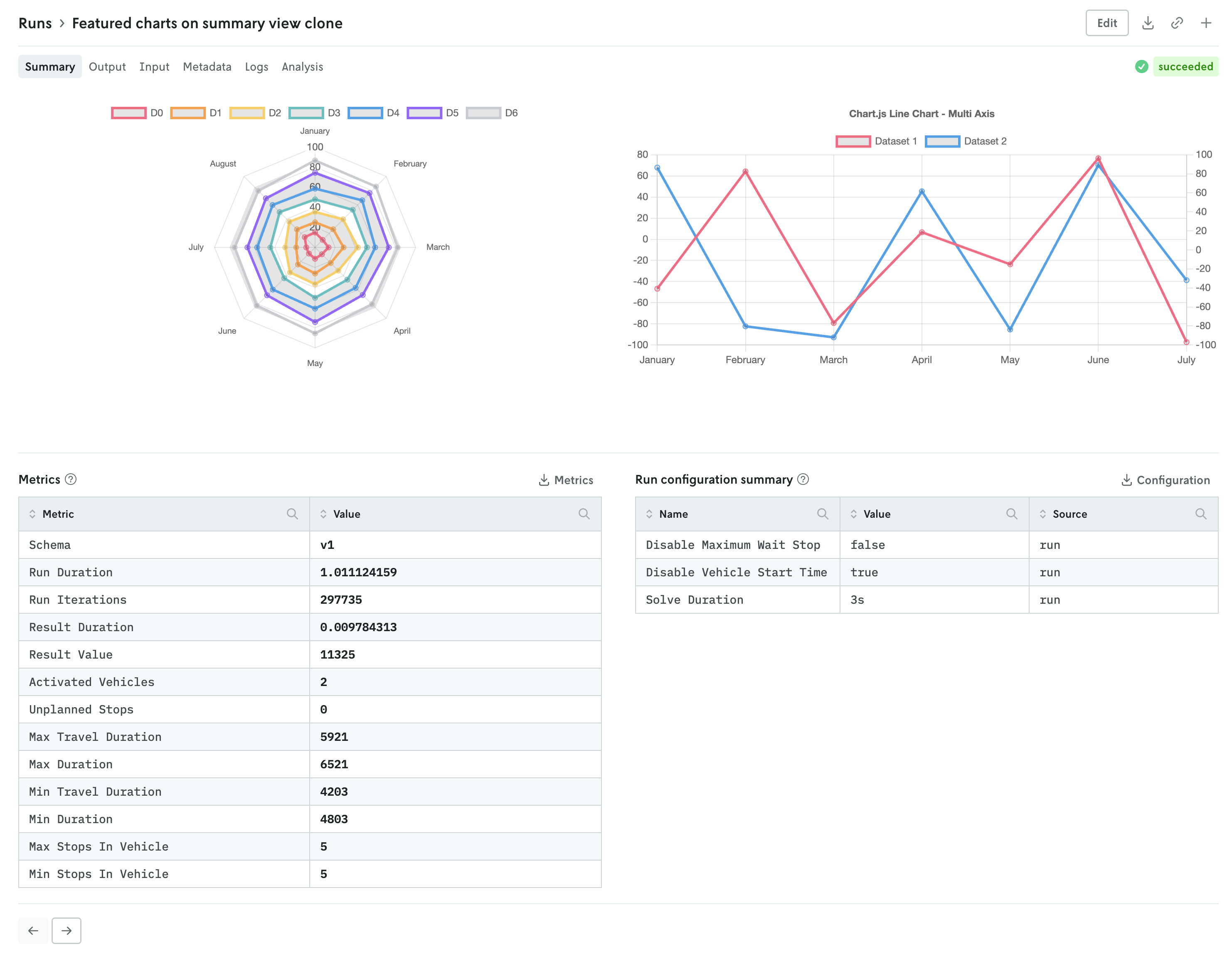

Add custom visuals to the run summary view

November 19, 2025

You can now set specific custom run visuals to appear on the summary view of the run details view. They will appear in a top row above the metrics and run options summary tables.

The custom visuals that appear on the summary view follow the same schema with the addition of a slot property. First, you must specify that the custom run visual should appear in the summary view with the "type": "summary-tab" designation, then you define the order with the slot property. An example of specifying a single custom visual is shown below:
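A minimal sketch of a single summary visual, written as a Python dictionary. The `"type": "summary-tab"` designation and the `slot` property come from this note; the placement of `slot` inside the `visual` block and every other key and value are assumptions made for illustration.

```python
def summary_visual(label: str, slot: int, content: list) -> dict:
    """Build a hypothetical custom visual asset for the run summary view."""
    # The note allows slot values 1, 2, or 3 only.
    if slot not in (1, 2, 3):
        raise ValueError(f"slot must be 1, 2, or 3, got {slot}")
    return {
        "name": label,               # assumed key
        "content_type": "json",      # assumed key and value
        "visual": {
            "schema": "chartjs",     # assumed charting schema name
            "label": label,          # assumed key
            "type": "summary-tab",   # show the visual on the summary view
            "slot": slot,            # position within the top row
        },
        "content": content,
    }


# A single visual placed in the first slot.
single = summary_visual("Cost breakdown", slot=1, content=[{"type": "bar"}])
```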

You can specify up to three custom visuals for the run summary view. The slot property can have the values 1, 2, or 3:

- `1` specifies that the visual will be on the left,

- `2` specifies that the visual will be on the right if there are two visuals, or in the middle if there are three, and

- `3` specifies that the visual will be on the right.
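The slot rules can be restated as a small lookup. This is only an illustration of the layout described here, not Console's implementation; the function name is hypothetical, and it assumes slots are assigned contiguously starting from 1.

```python
def slot_position(slot: int, total_visuals: int) -> str:
    """Return where a summary visual lands for a given slot and visual count."""
    # Assumption: between one and three visuals, with slots used in order.
    if not 1 <= total_visuals <= 3:
        raise ValueError("the summary view holds between one and three visuals")
    if not 1 <= slot <= total_visuals:
        raise ValueError("slot must be between 1 and the number of visuals")
    if slot == 1:
        return "left"
    if slot == 2:
        # Slot 2 is the rightmost of two visuals, or the middle of three.
        return "middle" if total_visuals == 3 else "right"
    return "right"
```

For example, `slot_position(2, 2)` gives `"right"`, while `slot_position(2, 3)` gives `"middle"`.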

Note that the visuals will automatically adjust for narrower screens (e.g. mobile devices).

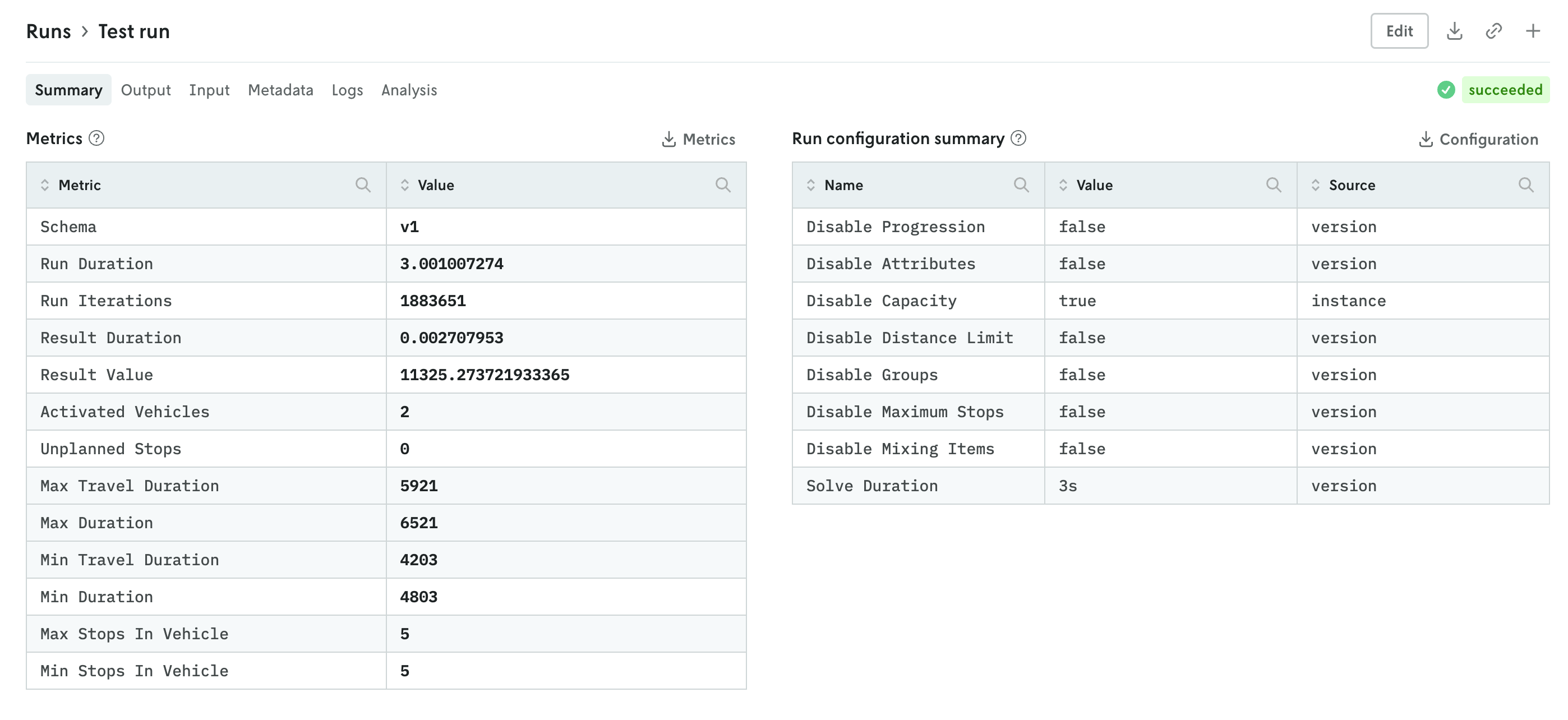

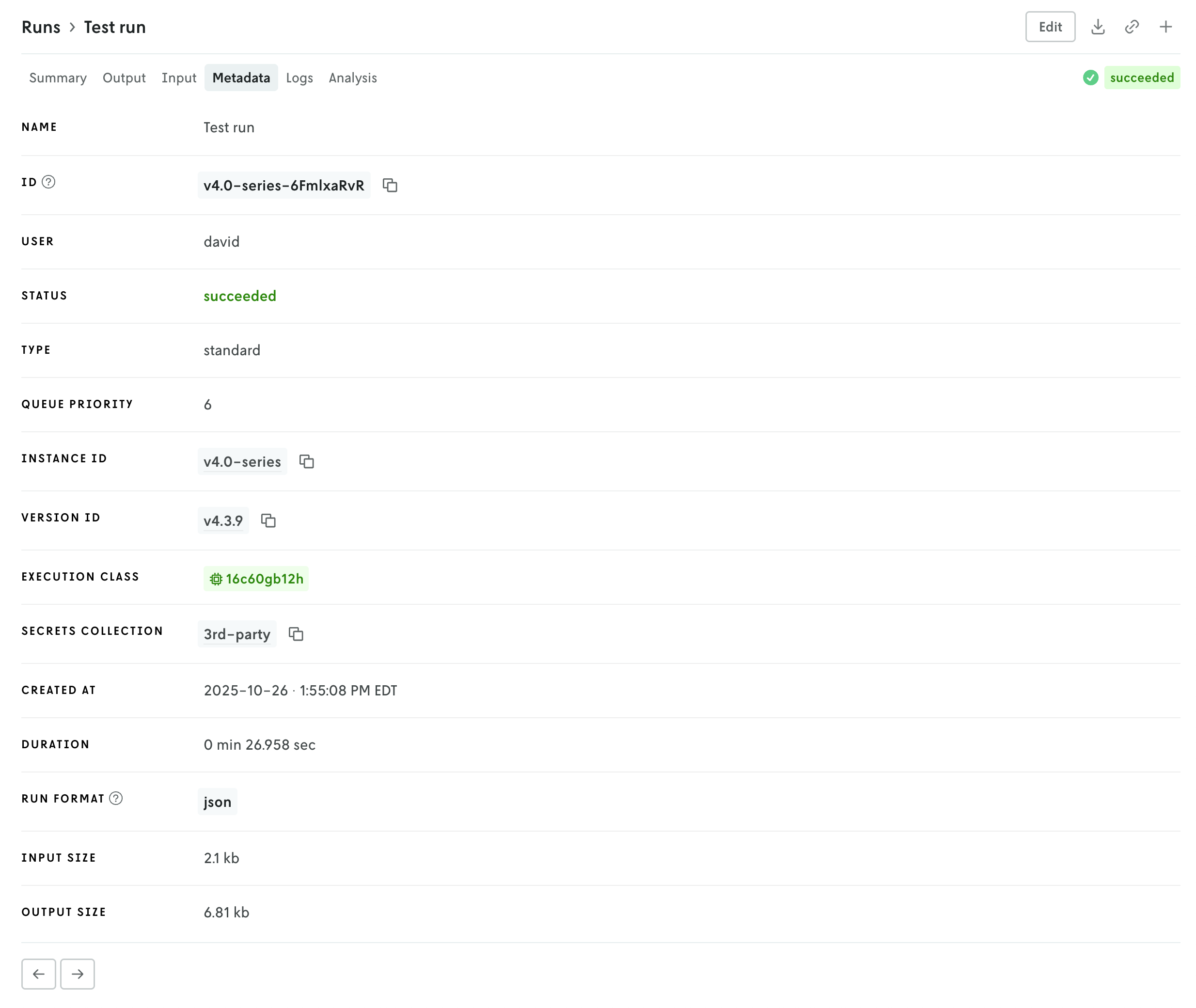

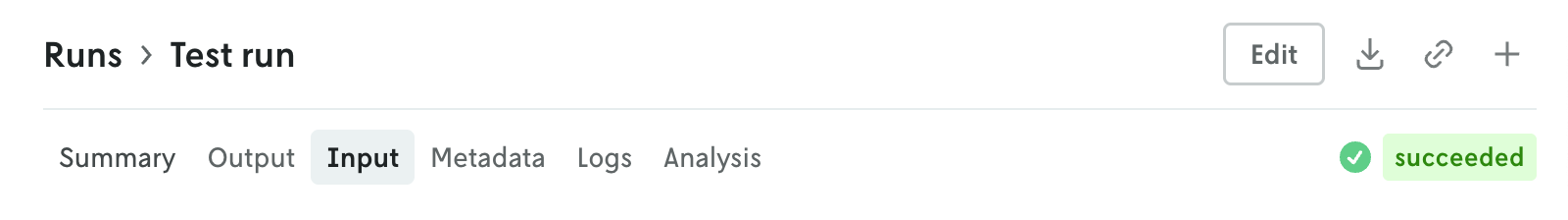

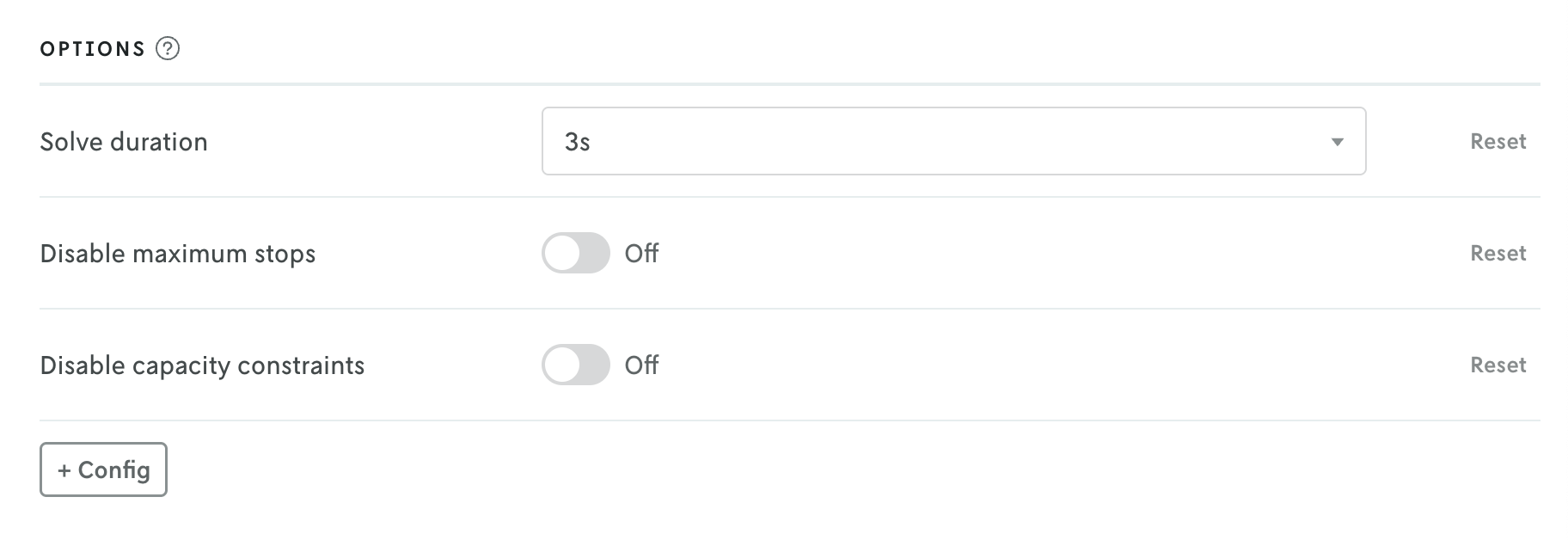

Updated run details views

November 17, 2025

The details view for runs was updated to better align with the data returned and with common viewing patterns. Summary tables of run metrics and options used are separated into their own view called Summary, which is the default landing view when you click on a run. If you are documenting the objective function in your output, then a table summarizing the objective function for the run will be displayed as well.

You can further enhance the summary view by adding your own visuals. See the release note for adding custom visuals to the summary tab for more information.

The input and output tabs have been updated with more intuitive controls for managing table views, raw data views, and visualizations (where they exist).

Additional views include a new Metadata view which displays a variety of information related to the run like duration, type, queuing, which version and instance were used, and so forth. The run status has been pulled out of the metadata view and displayed in the header so you can easily view the status of a run no matter which tab view is active.

There are also tabs for viewing run logs, ensemble analysis details (if it’s an ensemble run), and series data charts if they exist (in the Analysis tab). You can navigate between the views by clicking the tabs in the header or by using the left and right arrows in the page footer.

You can still edit the run, download the full input and output files, share views, create a new run, or clone the run you’re viewing using the action buttons in the header.

Set display names for managed options

November 12, 2025

When defining managed options for your model with the app manifest, you now have the ability to set a display name for the option. Previously, the option’s actual name was displayed, but internal model option names do not always translate to user-friendly names in a UI.

To define a display name for an option, you just add a display_name property in the ui definition block, like so:
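A minimal sketch of such an option, written as a Python dictionary that mirrors a manifest entry. Only the `display_name` property inside the `ui` block comes from this note; the option name, the `option_type` and `default` keys, and the `field_label` helper are hypothetical and used for illustration only.

```python
# Hypothetical managed option as it might appear in the app manifest.
# Only ui.display_name is taken from the release note.
option = {
    "name": "max_solve_seconds",  # internal option name (assumed)
    "option_type": "int",         # assumed key and value
    "default": 30,                # assumed key and value
    "ui": {
        "display_name": "Maximum solve time (seconds)",
    },
}


def field_label(opt: dict) -> str:
    """Return the label to show: display_name when set, otherwise name."""
    return opt.get("ui", {}).get("display_name") or opt["name"]


print(field_label(option))
```

An option without a `ui` block falls back to its `name` in this sketch.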

Then, when the option is loaded in Console, the name for the option field will be the display_name value rather than the name value.

You can read more about the display_name option in the UI section of the managed options docs.